Will Artificial Intelligence be Used to Politicize Science?

Musings on AI and Scientific Integrity

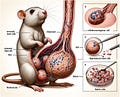

Frontiers in Cell and Developmental Biology is a reputable journal, so scientists were surprised to see the above image make it through the peer-review process. The image has multiple problems. One is the clearly distorted and inaccurate anatomy of a male rat. Another is that the image is filled with meaningless gibberish such as “stemm cells” and “dck.”

As noted in the caption above, this image was created with generative artificial intelligence software, made it through a peer-review process, and was published in a reputable scientific journal in February 2024. Frontiers has since retracted the paper. In a statement on how this got through the peer-review process, Frontiers said that a reviewer did note issues with the image, but the authors of the paper did not address them. Frontiers said it was going to investigate how its process allowed the reviewer’s concerns to be overlooked.

AI and the peer-review process

The peer-review process is a hallmark of science. Scientific experts who work in the same, or similar, fields of research review each other’s work and critique it. Two to three reviewers provide feedback and comments to researchers on draft papers that detail their methods, data analyses, and findings. The point of the process is to ensure that results and conclusions made by researchers are sufficiently supported by their methods, data analyses, and logic. If reviewers come to the conclusion that the scientist’s results are not supported by their research, then their work is not published.

The peer-review system is not perfect. Every year thousands of papers are retracted. In 2023, a landmark year for retractions, more than 10,000 papers were retracted from scientific journals. Shoddy science makes it through the peer-review system, and there is also peer-review fraud, which can involve self-review or researchers nominating fake reviewers.

Generative artificial intelligence (AI), deep-learning models that can generate high-quality text, images, and other content based on the data they were trained on, poses additional challenges to the peer-review system. As demonstrated in the Frontiers case, AI tools could promote inaccurate findings. If an image like the one above can make it through the peer-review system, imagine an image only slightly distorted - one where the error is maybe not glaringly apparent.

Researchers are concerned about deception via AI in science, and rightly so. Such deception could very well have scientists following up on a published result that was based on a lie generated by AI. That’s a waste of money and time. Another concern is that policymakers might rely on AI-generated falsified data, which could have significant repercussions for public health protections, for example. Increased cases of research misconduct involving AI could also contribute to the public’s distrust in science and science-informed policy.

Perhaps the larger concern is that AI breaks the peer-review system entirely, at least as we currently know it. If scientists cannot discern AI-generated scientific fraud from real science, is the peer-review system still adequate? The answer to that question is another story for another day because today’s story is about AI and scientific integrity. So, why did I take you through this journey on research misconduct involving AI? To illustrate that what you’re seeing in the news on this issue is mostly about AI and research integrity, not scientific integrity.

Research Integrity ≠ Scientific Integrity

The above story of the retracted Frontiers paper is an issue of research integrity, not scientific integrity. That distinction is important for this post, and likely for others SciLight will publish in the future.

What is the difference between the two? The purpose for the misconduct.

Researchers may attempt to publish, sometimes successfully, findings that are based on falsified data, distorted images, or plagiarized results. The motives for research misconduct are varied, but often involve pressure to publish research in reputable journals to further one’s career. Publications, especially those in high-impact-factor journals, can bring prestige, financial gains, or tenure at a university.

Contrast the above with a hypothetical case where a political appointee at a federal agency instructs scientists to cherry-pick scientific literature so that a government determination on an endangered species listing results in the species not being listed (cherry-picking science has certainly happened in listing decisions before). An investigation reveals that the political appointee has been receiving emails from a large corporation that plans to build a facility on land in the unlisted species’ remaining habitat, critical habitat that would have been protected from development if the species had been listed as endangered. In these emails, the political appointee is sympathetic to the corporation’s concern that its finances will be negatively impacted if it cannot build its facility in this particular area. The political appointee also has demonstrated through prior work that they are not a fan of the Endangered Species Act. The political appointee tells the corporation that they’ll ensure the species isn’t listed as endangered.

Scientific integrity violations are never as clear as the one articulated in the hypothetical case above. But the point I want to demonstrate with the hypothetical is that the motive for the scientific misconduct here is political. The political appointee wants the policy decision aligned with their ideology, so they sideline the science, in this case by cherry-picking certain scientific literature so that a policy determination swings in a specific direction (not listing a species).

While political officials are typically the source of scientific integrity violations, this is not always the case. For example, a pharmacist in Wisconsin purposefully destroyed about 500 COVID-19 vaccine doses because of political disinformation about the safety of these vaccines. The motive behind the pharmacist’s sidelining of science was political.

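Scientific integrity, as SciLight will be using the term, will always refer to protecting science from politicization, and a scientific integrity violation will always refer to the sidelining or manipulation of science for political purposes.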

AI & Scientific Integrity

So, will AI be used as a tool to politicize science? It certainly could be, and past scientific integrity violations help us imagine how.

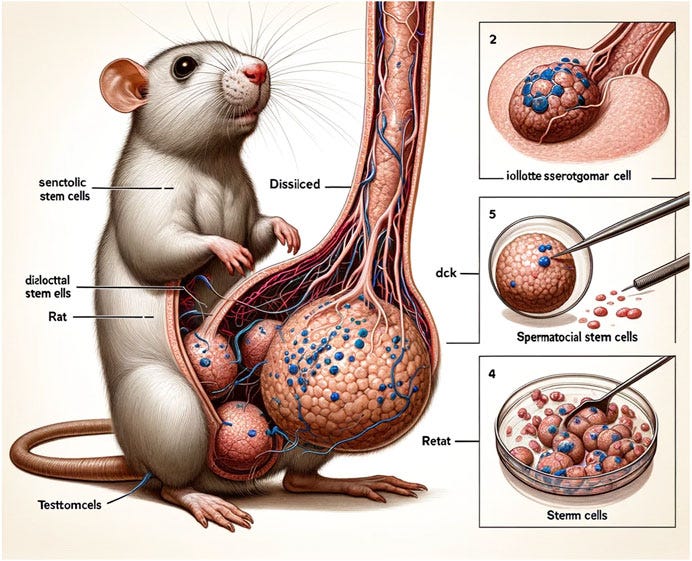

Sharpiegate is an infamous example of a scientific integrity violation. It began with a tweet from former President Trump on September 1, 2019, stating that Alabama would be impacted by Hurricane Dorian. This was misinformation because none of the forecasting models at that point showed Alabama in the path of the hurricane. In an attempt to combat this misinformation, and to calm the many Alabama residents worried for their lives and the lives of loved ones, the Birmingham National Weather Service tweeted that Alabama was not in the path of the hurricane, a direct rebuttal to the president’s claim.

Then, on September 4, 2019, former President Trump displayed the National Hurricane Center's August 29 diagram of Dorian's projected track, but with an odd, misshapen Sharpie line added. The Sharpie line extended the hurricane’s possible path into southern Alabama. Trump later tweeted that “almost all models” showed Hurricane Dorian hitting Alabama, further committing to the misinformation. And on September 6, 2019, the National Oceanic and Atmospheric Administration (NOAA) disavowed the Birmingham National Weather Service in favor of Trump’s misinformation. A later formal investigation found that agency science was politicized, a violation of scientific integrity.

One of the reasons the politicization of science was so clear in this case is the Sharpie marker. I mean, you can clearly see the Sharpie line in the image above. But imagine this same scenario with AI involved, a situation where it might be more difficult to discern whether a hurricane map has been doctored. What happens then? Does the government start emergency preparedness procedures to deal with hurricane relief in an area where there will be no damage? Imagine the taxpayer dollars that would’ve been wasted had our government taken action based on an AI-generated map with a falsified hurricane path. Additionally, imagine the confusion among agencies that would have to scramble to figure out exactly what is going on.

Our government is not prepared for AI and scientific integrity

I feel that our government is not fully prepared to deal with scientific integrity issues that will arise from the use of AI. Federal agencies are updating their scientific integrity policies or developing new policies if they didn’t exist before. But I have not seen any policy details on what AI means for scientific integrity, examples of what scientific integrity violations could arise from the use of AI, or steps that agencies might take to prevent these kinds of scientific integrity violations.

Where scientific integrity policies mention artificial intelligence at all, the language is sparse and does not provide much detail. For example, the provision on artificial intelligence in the Office of Science and Technology Policy’s scientific integrity policy reads: “Scientific integrity policies and practices must evolve as the Federal Government develops and uses new technologies, such as artificial intelligence, in order to provide for efficacy, accountability, and equity in the specific context of use.” In the Environmental Protection Agency’s updated policy, a provision on AI states, “Ensure that artificial intelligence tools are used consistent with Agency and Federal government policy and care should be taken that any future permitted uses are closely monitored to be sure they do not violate this Policy, for example as concerns authorship and attribution.”

In pointing out the lack of detail on AI and scientific integrity, I do not blame agencies for not knowing fully how to handle the issue. It is new and evolving. But I do want to call attention to a problem that could have very serious repercussions. I hope the National Science and Technology Council’s Subcommittee on Scientific Integrity is able to discuss this issue at length and develop some guardrails.

In some cases, it’s already difficult enough to uncover scientific integrity violations. If Sharpie markers are replaced with images generated by computers, we might all have a difficult time weathering that political storm.